Sit Straight!

A posture classifier that blurs YouTube videos when users don't sit straight. It is meant to make users conscious of the bad postures they slip into when they are not actively interacting with the app.

How does it work?

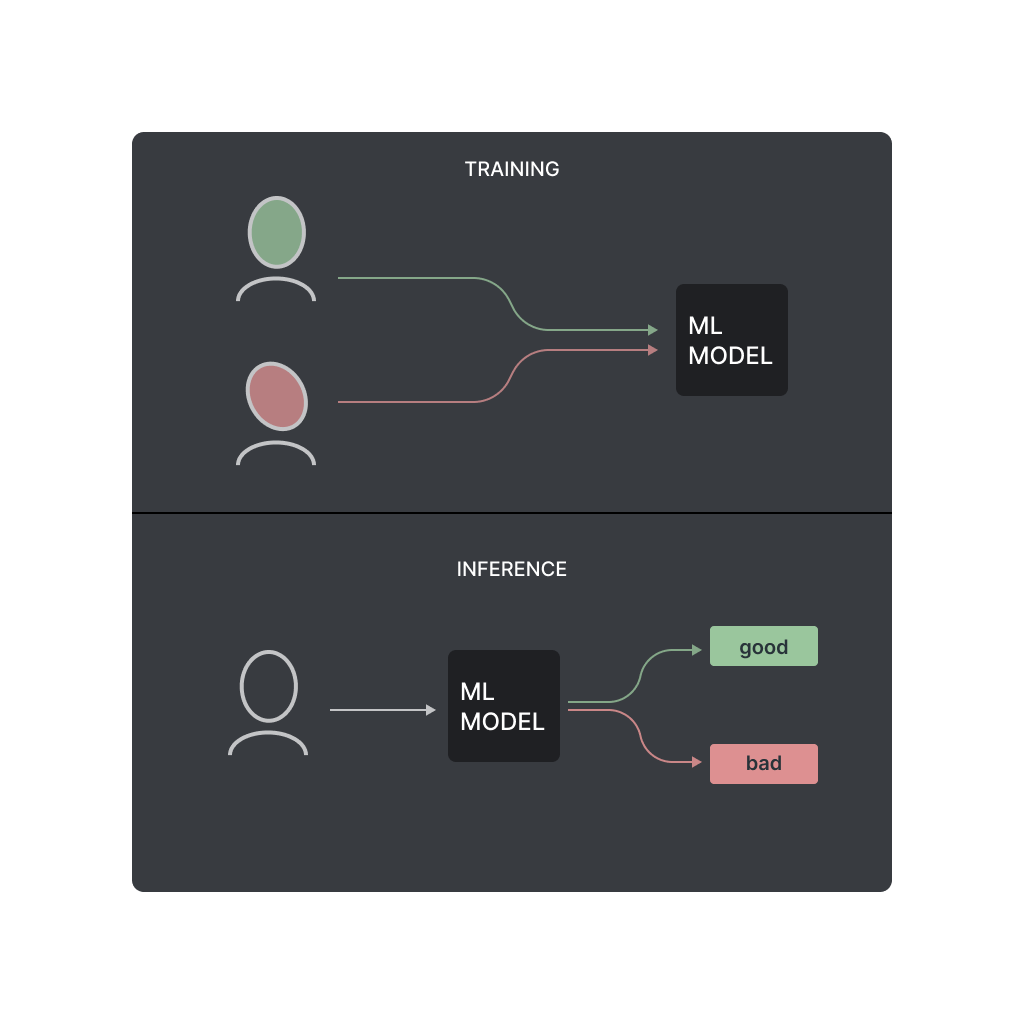

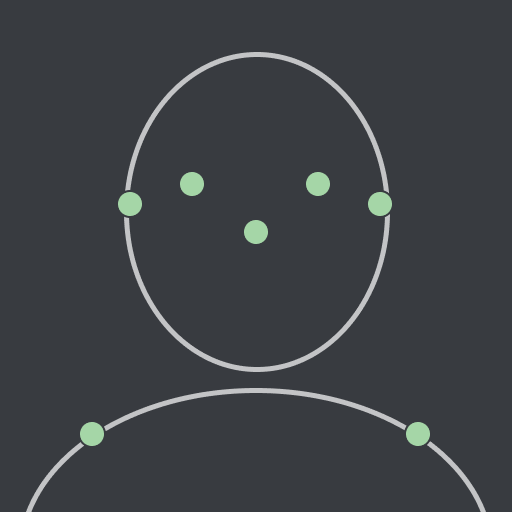

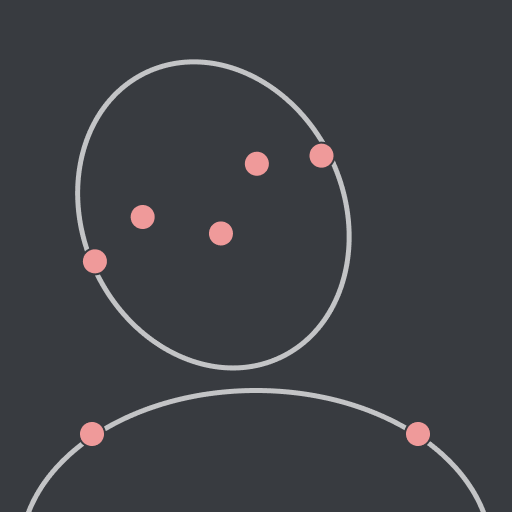

A machine learning algorithm has been trained to recognize what good & bad sitting postures look like. Using the device webcam, the app tracks postures in 2D space to see whether users are slouching or sitting upright.

Regular webcams can’t detect depth, so the algorithm is limited to tracking users’ postures in a 2D plane. If you move closer to or farther from the screen, the app won’t give accurate results.
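To illustrate what tracking posture in a 2D plane can look like, here is a minimal sketch. It is not the trained classifier the app uses: it swaps in a simple threshold heuristic, and the `UpperBody` shape, the `headHeightRatio` and `isSlouching` functions, and the `SLOUCH_THRESHOLD` value are all hypothetical names and numbers chosen for illustration. It assumes that slouching lowers the head relative to the shoulder line in the webcam image.

```typescript
// Hypothetical keypoint shape: pixel coordinates in the webcam frame.
interface Point {
  x: number;
  y: number;
}

// Assumed subset of body keypoints; a real pose model reports many more.
interface UpperBody {
  nose: Point;
  leftShoulder: Point;
  rightShoulder: Point;
}

// Height of the nose above the shoulder line, divided by shoulder width.
// Dividing by shoulder width reduces the effect of sitting closer to or
// farther from the camera, but cannot fully replace real depth data.
function headHeightRatio(body: UpperBody): number {
  const shoulderMidY = (body.leftShoulder.y + body.rightShoulder.y) / 2;
  const shoulderWidth = Math.hypot(
    body.leftShoulder.x - body.rightShoulder.x,
    body.leftShoulder.y - body.rightShoulder.y,
  );
  if (shoulderWidth === 0) return 0; // degenerate input, treat as slouching
  // Image y grows downward, so an upright nose has a smaller y than the shoulders.
  return (shoulderMidY - body.nose.y) / shoulderWidth;
}

// Assumed threshold, picked only for this example.
const SLOUCH_THRESHOLD = 0.5;

function isSlouching(body: UpperBody): boolean {
  return headHeightRatio(body) < SLOUCH_THRESHOLD;
}

// Made-up sample frames.
const upright: UpperBody = {
  nose: { x: 320, y: 150 },
  leftShoulder: { x: 220, y: 300 },
  rightShoulder: { x: 420, y: 300 },
};
const slouched: UpperBody = {
  nose: { x: 320, y: 240 },
  leftShoulder: { x: 220, y: 300 },
  rightShoulder: { x: 420, y: 300 },
};

console.log(isSlouching(upright), isSlouching(slouched));
```

A trained classifier learns its decision boundary from labelled examples instead of a hand-picked ratio, but it works from the same kind of flat 2D keypoints, which is why changes in distance from the camera can throw it off.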

good postures

bad postures

Privacy

The app needs constant camera access as its input, which could lead users to give up a lot of their data. This concern was addressed while designing the app.

The algorithm runs locally in the browser & none of your data is tracked. It can identify different body parts, but it doesn’t know whether you are indoors, outdoors, or sitting on your bed.

How it was made

Hi, I’m Atharva, the creator of this web app. ML-backed classification apps are often a black box that is hard to decipher. To make the app’s features & limitations more accessible, I have documented my motivations & design process.

You can check out the first version of the app.